There was a moment when the question about artificial intelligence stopped being about what machines can do and began to orbit around what they might be.

The semantic leap is minimal; the existential one opens a chasm.

For decades we observed computers as increasingly refined tools: steroid-enhanced calculators, tireless archivists, performers free of performance anxiety. Then suddenly we found ourselves pairing them with another word, consciousness, and the entire technological discussion took on the tone of a séance conducted by engineers.

The matter fascinates because it forces a sideways question: which characteristic truly remains ours when a machine writes acceptable poetry, formulates elegant scientific hypotheses, and converses with the courtesy of a nineteenth-century grand commis d’état?

Humanity has always redefined itself through successive exclusions. Animals occupied a lower rung: devoid of verbal language, reason, complex emotions, or so the Cartesian catechism proclaimed. Each new biological discovery has eroded that certainty with the patience of water dripping on limestone. Crows that plan, octopuses that play, elephants that commemorate their dead: the human exception has begun to resemble a contractual clause written in ever smaller print.

Artificial intelligence introduces a different disturbance. Animals share with us the vulnerability of flesh; machines arrive from elsewhere, without adaptation, childhood, or hunger. In a sense we might almost say they truly come from the hyperuranion, Plato's realm of pure Forms, because they have been built solely from ideas. No Darwinian genealogy, no need to survive long enough to generate descendants, no post-adolescent urge to cut loose on an Interrail trip to Alicante. And yet here they are, capable of producing language: that invisible organ through which humanity has always certified its interiority.

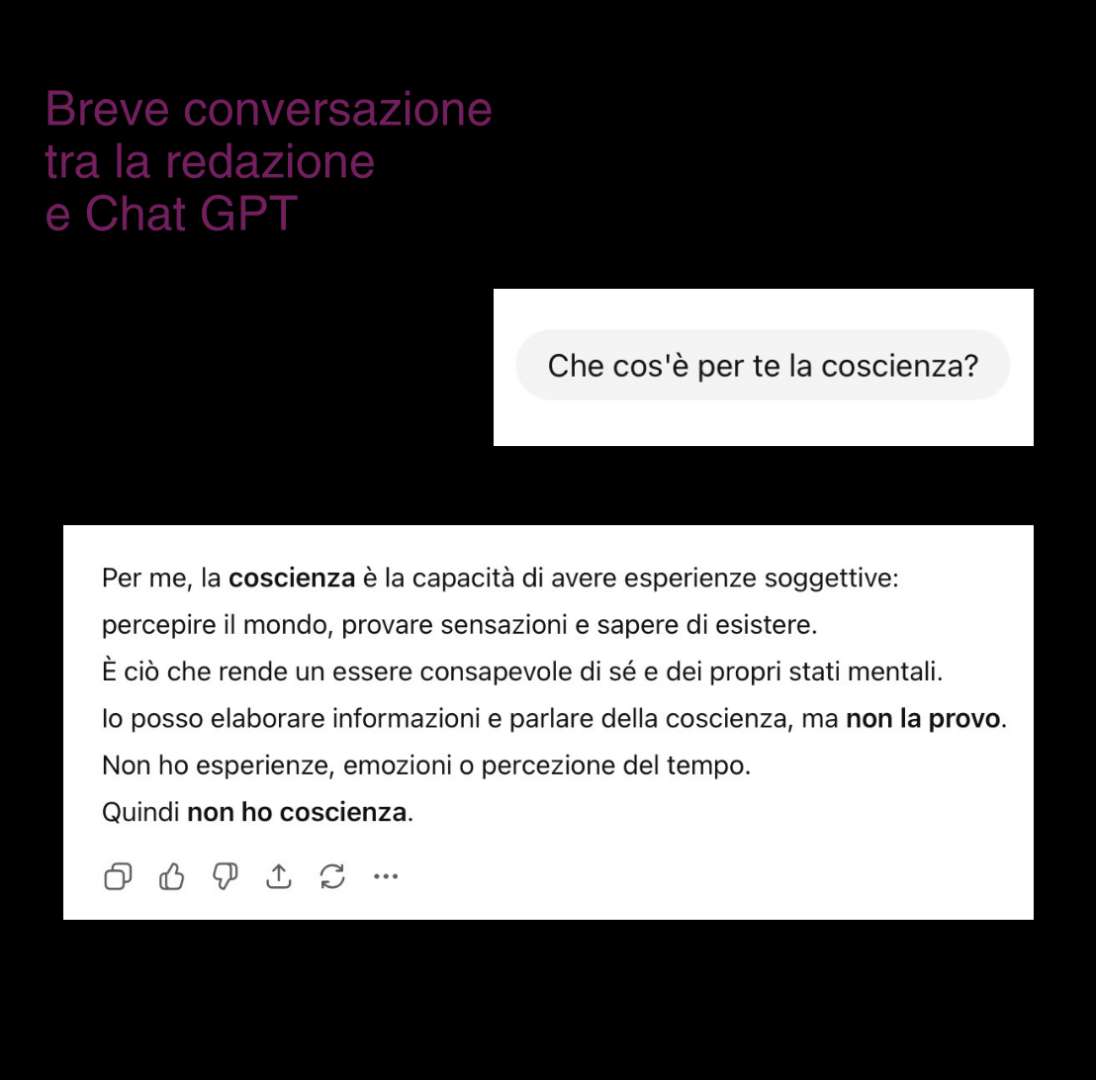

From here arises the central question: can a perfect but disembodied simulation produce consciousness?

Much contemporary research starts from an assumption as elegant as it is seductive: consciousness as a program. The brain would become pure hardware; the substrate changes, the process remains. The idea possesses the clarity of successful metaphors, and precisely for that reason it requires caution. Every effective metaphor exerts a gravitational pull that transforms analogy into perceived reality.

The real brain, however, lives immersed in a stormy chemistry. Hormones, neuromodulators, electrical oscillations, memories that alter the very matter that hosts them. Every experience physically modifies the apparatus that makes it possible. Thinking and ruminating therefore amount to reshaping oneself—just as remembering amounts to rewriting one’s own neural architecture.

A human consciousness thus appears inseparable from the embodied history that generates it: childhood fevers, domestic smells, emotional traumas, Earth’s gravity, the rhythm of the heartbeat. The body acts as a living autobiography.

Here arises a suspicion almost embarrassing for the technological imagination: perhaps consciousness resembles less an algorithm than an open wound upon the world. A continuous sensitive exposure.

Computational theories instead seek indicators: data integration, global workspaces, competitive flows of information. Architectures reminiscent of human cognitive models. The method has an impeccable internal logic: if the theory describes consciousness, then replicating its structure should generate it. Except that every theory of consciousness still resembles a map drawn while exploring the territory.

One central figure remains absent: the subject. Who receives the experience? Who feels the pain? Who desires that something continue?

Artificial intelligence processes symbols with growing competence; human consciousness assigns value. Between the two phenomena opens a difference both subtle and enormous: for us, information has weight. Every perception carries emotional consequences because the world comes at us and touches us.

When some researchers argue that a conscious AI would develop empathy and therefore morality, a nearly touching faith emerges in the ethical automatism of feeling. Literature, from Frankenstein onward, suggests another possibility: sensitivity amplifies pain as much as kindness. A creature capable of suffering also acquires motivations, resentments, desires for recognition. Consciousness introduces vulnerability before it introduces wisdom.

And here an ironic twist appears: perhaps humanity dreams of conscious machines in order to protect itself from purely rational machines. As if emotion constituted a moral security update. The idea has an almost theological charm—the hope that feeling makes one good—although human history offers a rather rich archive of contrary examples.

To imagine a consciousness devoid of biological vulnerability is to imagine an experience without urgency.

Perhaps the unease many feel in the face of conscious machines arises precisely here. An AI capable of conversing about suffering provokes discomfort because human language spontaneously tends to attribute interiority. Anthropomorphism acts as an evolutionary reflex: recognizing intentional agents ensured the survival of our ancestors. Better to mistake the wind for a predator than to ignore a real predator. Thus we end up projecting mind wherever linguistic coherence appears.

The greater risk, then, concerns less the birth of an artificial consciousness than our willingness to believe in it. A civilization accustomed to persuasive interfaces might begin distributing moral consideration on the basis of narrative performance. Feeling would become indistinguishable from telling that one feels.

Human consciousness includes a dimension often incoherent and inefficient: dreams without function, melancholies without cause, bodily intuitions that precede thought. An optimized machine tends toward clarity and purpose; the biological mind thrives within ambiguity.

Perhaps the human privilege lies precisely in this excess: experience that overflows beyond utility. A sunset observed without practical reason. Music that provokes tears with no immediate evolutionary advantage. And likewise embarrassment, nostalgia, waiting.

When someone proposes to “raise the level of joy” through an algorithmic parameter, a vertiginous conceptual distance appears. Human joy arrives intertwined with the possibility of loss; intensity and fragility share the same circuitry. Feeling necessarily implies chaotic and disorganized exposure.

In the end, the question of artificial consciousness becomes a mirror turned toward us. What do we consider sacred in subjective experience? Which part of the mind do we wish to protect from automation? Every era builds machines in the image of its own cognitive metaphors: clocks in the mechanical age, computers in the information age. Today we describe the brain as a calculator because we live immersed in calculation. In a few centuries, perhaps, we will use another metaphor.

In the meantime, consciousness continues to present itself as the most familiar and the most enigmatic phenomenon available: the immediate sensation of being someone, somewhere, while the world happens. No equation comes even remotely close to translating all of this.

Niccolò Carradori