The recent revolution in generative artificial intelligence is now fully underway, and the way we produce music is bound to change radically. When software such as Suno began to appear on the market, the dominant reaction was fear: many assumed that the work of musicians might gradually become obsolete.

Alongside these more alarmist views, however, a different interpretation emerged almost immediately. According to this view, generative artificial intelligence would not replace the music producer but would instead take on an ancillary role, as a new class of tools available to musicians. In this scenario, the producer remains a central figure, but now has access to technologies that can simplify certain stages of the creative process or offer support during a creative block.

That future is already here. And, at least for now, events seem to be proving the second group right: there are signs that Ableton may begin to integrate generative AI structurally into its software, Live, possibly as early as the next version.

But let us proceed in order. Much has already been said about Suno: almost all of us have had the chance to try its ability to generate tracks in different styles from a simple text prompt, an ability that even extends to setting user-supplied lyrics to music. Despite these possibilities, the tracks produced by the platform have not found a real audience of their own: in most cases their artificial quality is immediately apparent, a kind of soulless sheen that makes them easy to recognize and, in the long run, not very engaging to listen to. Where Suno has found fertile ground instead is in viral content: meme songs, parodies, and nonsense pieces made to accompany the so-called “slop” videos circulating on social media.

One of the applications attracting the most interest, however, is not the complete generation of songs but timbre transfer: techniques that transform an audio input, such as a voice, into something different, such as a drum, a violin, or another voice with different timbral characteristics. In other words, the point is not to “write music in place of the user” but to transform material that already exists, often recorded by the producer themselves.

This kind of technique is not new. In academic music research, timbre transfer has been studied for years at institutions such as IRCAM in Paris, one of the world’s leading research centers for electronic and computer music. It was in that context that neural-network systems such as RAVE (Realtime Audio Variational autoEncoder) were developed, and composers and sound designers use them to manipulate and transform audio signals in real time. I also discussed these technologies in a previous article published in The Bunker.

At the same time, another trend is emerging: plugins and production environments that are increasingly integrated with artificial intelligence systems. A significant example is Co-Producer, developed by the California-based company Output and presented in 2025. Rather than generating music from scratch, Co-Producer works as a kind of creative assistant within the DAW: the plugin “listens” to the project, analyzes its rhythm and harmony, and suggests compatible samples from a large integrated sound library.

Among its most interesting features is Re-Imagine, which can generate a potentially endless series of variations on the same sample, modifying its rhythmic or timbral characteristics to produce ever-new versions. The idea behind it is relatively simple: many producers use the same sample platforms (Splice, for example) and therefore end up drawing on the same sounds. The result is a certain homogenization of productions. Tools such as Re-Imagine try to break this cycle, letting users start from a familiar sample and generate alternative, more personal versions of it.

Naturally, one might object that the repetition of samples has not always been perceived as a problem. On the contrary, certain samples have often become true stylistic markers, helping to define entire musical genres. Think of the drum machines behind house and techno, or of the vocal loops and percussion typical of funk carioca and its more recent offshoots such as Brazilian phonk: recognizable sounds that become an integral part of a genre’s identity. In this sense, the standardization of samples is not necessarily a limitation; it can become an element of cultural recognizability.

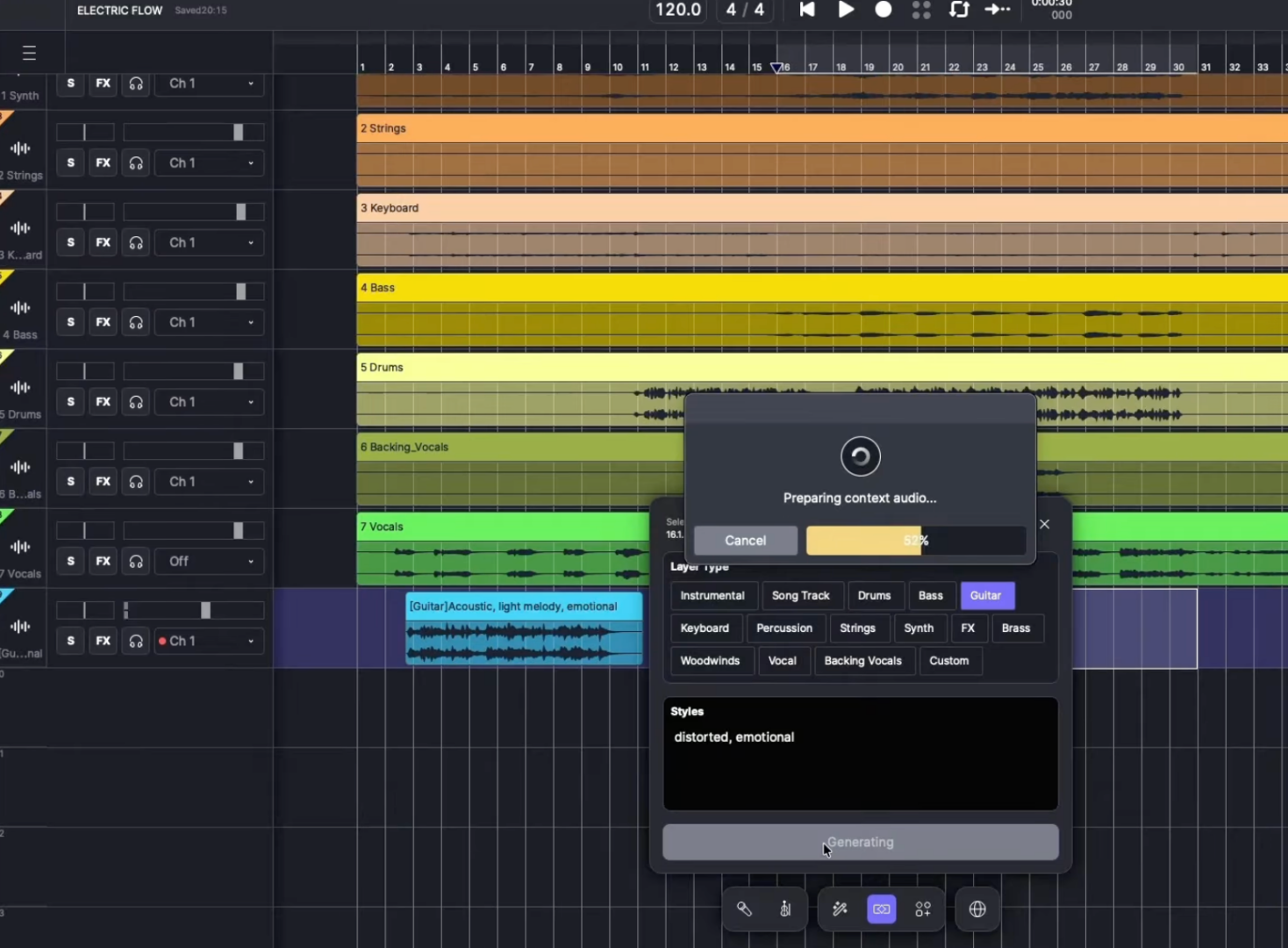

If Co-Producer represents a first step toward the integration of artificial intelligence into traditional music production, other platforms seem to be going even further. This is the case with Suno Studio, launched by Suno itself. Here artificial intelligence does not merely help the producer search for sounds but enters directly into the creative phase: the system lets users generate instrumental or vocal parts from text prompts while automatically keeping them consistent with parameters such as BPM, key, and the structure of the production. In practice, the DAW is increasingly becoming a space of co-creation between user and generative model.

It is perhaps also in light of these transformations that some of the sector’s historic companies are beginning to move. In recent months, for example, a move by Ableton—the company behind the famous DAW Ableton Live—has sparked discussion. In January, the company published a job posting for the position of Senior Machine Learning Research Engineer, a rather clear sign of its interest in integrating artificial intelligence systems into its software ecosystem.

The news has fueled much speculation in the producer community. On the one hand, Ableton has always been perceived as a tool strongly oriented toward manual control and experimentation; on the other, its explicit search for machine-learning expertise suggests that even such a well-established platform is beginning to consider the role AI will play in music production in the near future.

And perhaps this is where the most interesting issue lies: not in replacing the musician, but in redefining the figure of the producer, who is increasingly accompanied (or, to use the now-common term, “assisted”) by an artificial “co-producer.”

Pierluigi Fantozzi